Most companies today invest in customer research, and not on a small scale.

According to ESOMAR, the global insights and market research industry now exceeds $140 billion in annual revenue, with tens of billions spent each year specifically on market and consumer research. Studying customers has become a mainstream, well-funded practice rather than a niche capability.

I’ve worked across very different organisational contexts: early-stage startups, scaling companies, and large corporations. Unsurprisingly, the way research is done varies widely.

In some teams, it’s handled by a single generalist. In others, companies rely on external agencies or build dedicated in-house research units. The approach usually reflects budget, maturity, and internal structure.

On the surface, everything looks right.

And yet, this is where a quiet contradiction begins to emerge.

Despite significant investments in understanding customers, many strategic initiatives never make it very far. Strategy changes are often filtered out early. Sometimes there isn’t enough evidence to defend them convincingly. Sometimes the timing feels wrong. And often, managers are simply reluctant to take on the risk of changing direction – even when the data points in that direction.

What makes this particularly challenging is that the breakdown rarely happens at the execution stage. It happens much earlier, before a strategic shift is ever fully committed to.

When I talk about strategic decisions, I don’t mean incremental optimisations or campaign-level tweaks. I’m referring to choices that fundamentally shape how a company shows up in the market: redefining the ideal customer profile, changing the primary go-to-market motion, rethinking the core value narrative, or deciding which growth levers the business will deliberately stop prioritising.

These are exactly the decisions teams hope customer research will support.

In practice, however, research more often informs activity than direction. It produces knowledge, context, and validation, but not commitment. From the outside, it appears that research is happening and strategy is being discussed. From the inside, the link between the two is fragile and often breaks long before any meaningful change reaches the market.

Where research breaks in real teams

When customer research fails to influence strategy, it rarely breaks in one dramatic moment. More often, it erodes quietly across a few predictable fault lines – ones I’ve seen repeat themselves across companies of very different sizes and levels of maturity.

None of these reasons is surprising. Most PMMs will recognise them immediately, and we’ve all seen these dynamics play out, often more than once.

1. Teams measure what’s easy, not what’s decision-relevant

Most research systems are built around methods that are familiar, defensible, and easy to operationalise: surveys, interviews, usability tests, A/B experiments. These approaches are valuable, but they tend to privilege what customers can articulate over what actually drives decisions.

As a result, teams become very good at explaining attitudes and preferences – and far less confident when it comes to predicting behaviour under real-world conditions, where risk, emotion, and trade-offs matter. Over time, this creates a subtle bias: insights feel informative, but not strong enough to justify strategic change.

2. No one truly owns synthesis

In many organisations, research exists, but synthesis doesn’t.

Findings live in decks, documents, and dashboards, often owned by different functions. Product, marketing, and research teams each interpret insights through their own lenses, but no one is explicitly responsible for translating them into strategic implications.

In one of my previous roles, multiple high-quality studies pointed to the same underlying customer tension. Product teams saw it. Marketing saw it. Research documented it thoroughly.

What was missing wasn’t evidence – it was ownership. No one felt responsible for turning that shared understanding into a clear strategic recommendation. The insight stayed visible, but inactive.

3. Speed outpaces research cycles

Another common friction point is timing.

Product launches, competitive pressure, and revenue targets rarely wait for research to reach perfect clarity. When decisions need to be made quickly, research often arrives either too late or too fragmented to be persuasive.

I’ve seen teams acknowledge that an insight was “probably right”, while still moving forward without it, simply because the moment to act had already passed. The issue wasn’t disbelief. It was a misalignment between research cadence and decision timelines.

This doesn’t mean speed kills research. It means slow, sequential research models struggle to survive in fast-moving environments – especially when insights are not framed in a way that supports timely decisions.

4. Strategic risk feels greater than the evidence

Finally, even when insights are strong, changing strategy feels risky.

Shifting an ICP, altering a go-to-market motion, or reframing the core narrative carries visible consequences. Without a clear way to connect research findings to these high-stakes choices, managers tend to default to caution.

In another case I worked on, the data clearly suggested that the current target segment was no longer the strongest growth opportunity. The insight was acknowledged and then quietly set aside. The perceived risk of changing direction outweighed the confidence teams had in defending the recommendation.

This is rarely about intent. More often, it’s about how the system is set up. And it’s why simply “doing more research” rarely fixes the problem.

Why more research doesn’t fix the problem

When we look at the challenge from a distance, it’s easy to land on what feels like the natural solution: just do more research.

If customer research doesn’t move strategy, then the instinct is to ask for more interviews, longer surveys, bigger samples, more tools, bigger budgets, or a dedicated team. I’ve heard that sentence in boardrooms and brainstorms alike.

And yet, almost paradoxically, this rarely solves the real problem.

It’s not that more research is a bad thing. It’s that without a clear mechanism for turning research into direction, all those additional studies just produce more insights that never quite change what a team actually does.

I’ve been in situations where expanding the research function felt like progress: we had more data, more reports, and even more visibility on customer behaviour.

But when it came time to make strategic decisions – should we pivot our positioning, shift our core segment, change our go-to-market motion? – the conversation rarely gravitated toward the research findings. Instead, it skidded into debates about risk appetite, timing, or leadership preference.

What’s missing isn’t the quantity of insight. What’s missing is the orientation of the process.

Most research systems are optimised to answer “what happened?” or “why might this be happening?” These are valuable questions. But strategy demands a different kind of input: “what should we actually change because of this?” and “are we confident enough in that insight to put a strategic bet on it?”

When that distinction isn’t clear, research becomes upstream noise rather than a strategic lever. You can generate more and more insights and still end up with the same strategic inertia, because none of them were framed in a way that helps a leader say “yes” instead of “let’s wait and see”.

So the real barrier isn’t a lack of ideas. It’s a lack of structure around how insights are translated into choices.

And that distinction between interesting and decision-relevant insights is where the next shift must happen.

What changes when PMMs approach research differently

At first glance, it might seem that the answer to research not impacting strategy is simply “do better research.” But if that were true, we would see almost every company that invests in studies making clearer strategic decisions. And we don’t.

Most customer research doesn’t fail because it is inaccurate. It fails because it is not decision-ready. It tells us what happened, sometimes even why people say they behave a certain way, but it rarely tells us what should change because of it.

In strategic literature, there has long been a distinction between:

- Descriptive insight

- Decision-support insight

And most customer research still lives firmly in the first category.

Descriptive insight helps us understand what users notice, what they complain about, or what they prefer. It helps explain attitudes and perceptions. But it does not automatically answer the harder and riskier question: “Should we adjust our strategy because of this?”

Put simply, research often produces accurate findings. What it rarely produces is decision clarity.

This gap exists not because research methods are inherently flawed, but because many research systems are optimised for explainability – producing clean, internally coherent stories – rather than for predictive validity: the ability to reliably forecast what customers will actually do next.

It is tempting to assume that more advanced approaches, such as neuromarketing or consumer neuroscience, will solve this problem. These methods aim to capture subconscious and emotional responses that traditional surveys and interviews often miss, and in many cases, they do offer a deeper view into pre-conscious drivers of behaviour.

A 2024 study published by Springer showed that EEG-based neuromarketing data, when combined with machine-learning models, could effectively predict unconscious buying behaviour, outperforming some traditional approaches in identifying preference patterns.

Likewise, an overview from Harvard Professional Education describes neuromarketing as a discipline designed to forecast consumer decisions by analysing physiological and cognitive responses, not just stated opinions.

A recent review in Frontiers in Neuroergonomics also documents the growing use of neuroscience techniques across the consumer decision journey, linking emotional and cognitive signals to stages of choice.

These findings are important, but they don’t change the core challenge.

Even the most sophisticated behavioural methods won’t move strategy on their own. They can surface signals that were previously invisible, but unless those signals are explicitly tied to real business choices – who to target, how to position, and where to invest – they remain interesting descriptions rather than strategic guidance.

What truly changes when PMMs approach research differently is not the volume of insight, but the discipline applied to synthesis and framing.

This shift requires PMMs to operate less as insight curators and more as decision architects. It also requires acknowledging a difficult truth: not every validated insight deserves to shape strategy.

Deciding which insights do deserve it comes down to a few questions. They aren’t theoretical; they usually come up when teams are already under pressure to decide:

- Does this insight reduce uncertainty around a high-stakes decision?

- Does it predict behaviour that materially affects the business?

- If we act on it, what becomes harder to reverse?

This move, from curiosity to consequence, is subtle but critical. It marks the difference between research that lives in decks and research that genuinely reshapes direction.

Behavioural and decision science helps here not because it replaces traditional research, but because it forces a higher bar. It pushes teams to look beyond explanation and toward prediction, beyond narratives that sound right and toward signals that reliably forecast real decisions.

When research is framed this way, it becomes more than information. It becomes a strategic signal: one that leaders can weigh, challenge, and ultimately act on with confidence.

When research exists, but the strategy doesn’t move

Scenario 1: Research happens. Strategy stays the same.

This is the most common, and often the most frustrating, scenario.

The company invests in customer research. Interviews are conducted. Surveys are launched. Usability tests and experiments are run. In some cases, a researcher is hired, or an external agency is brought in. On paper, the organisation is “doing research right.”

And yet, when strategic conversations happen about positioning, go-to-market motion, or which segment to prioritise, research rarely plays a decisive role.

What this looks like in practice:

- Insights arrive fragmented, often tied to specific initiatives or teams

- Findings describe attitudes, preferences, and reactions

- Different studies point in slightly different directions

- No clear ranking exists between “interesting” and “strategically consequential” insights

PMMs are left synthesising slides rather than shaping decisions. Research becomes something to reference rather than something to act on.

Why doesn’t it move?

- Insights explain what customers say or feel

- They are not tested for their ability to predict real behaviour

- They are not explicitly connected to irreversible choices

As a result, research feels informative but non-binding. It supports execution (messaging tweaks, UX adjustments, campaign ideas) but stops short of influencing strategy.

Scenario 2: Research becomes a strategic input

In the second scenario, the amount of research conducted may be similar, but the role it plays is fundamentally different.

Here, PMMs take ownership not just of insight delivery, but of synthesis and decision framing.

Instead of treating all validated findings as equal, PMMs introduce discipline:

- Insights are ranked, not just summarised

- Each insight is evaluated against its strategic consequences

- Research outputs are framed around decisions the business must make

The key shift is simple but powerful: research is no longer presented as “what we learned,” but as “what this allows us to decide with more confidence.”
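For teams that like to make this discipline concrete, the ranking step can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Insight` fields, the 0 to 3 scoring scale, and the unweighted sum in `lens_score` are all hypothetical choices, loosely mirroring the three questions about uncertainty, behaviour, and reversibility raised earlier. The example insights are invented.

```python
from dataclasses import dataclass


@dataclass
class Insight:
    summary: str   # what the research found
    decision: str  # the strategic decision it bears on
    # Hypothetical 0-3 scores, one per lens question (assumed scale):
    reduces_uncertainty: int  # does it reduce uncertainty around a high-stakes decision?
    predicts_behaviour: int   # does it predict behaviour that materially affects the business?
    hard_to_reverse: int      # how hard would acting on it be to reverse?


def lens_score(insight: Insight) -> int:
    """Unweighted sum of the three lens scores (an assumed rule, max 9)."""
    return (
        insight.reduces_uncertainty
        + insight.predicts_behaviour
        + insight.hard_to_reverse
    )


def rank_insights(insights: list[Insight]) -> list[Insight]:
    """Order insights from most to least strategically consequential."""
    return sorted(insights, key=lens_score, reverse=True)


def frame_as_decision(insight: Insight) -> str:
    """Present an insight as a decision to make, not a finding to note."""
    return (
        f"Decision: {insight.decision} | "
        f"Evidence: {insight.summary} | "
        f"Lens score: {lens_score(insight)}/9"
    )


# Invented example data, for illustration only.
insights = [
    Insight("Users like the refreshed onboarding copy", "Messaging tweak", 1, 1, 0),
    Insight(
        "Growth is shifting away from the current target segment",
        "Redefine the ideal customer profile",
        3, 2, 3,
    ),
]

for insight in rank_insights(insights):
    print(frame_as_decision(insight))
```

The scores themselves remain a judgment call. The value of a sketch like this lies less in the arithmetic than in forcing every insight to name the decision it is supposed to inform.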

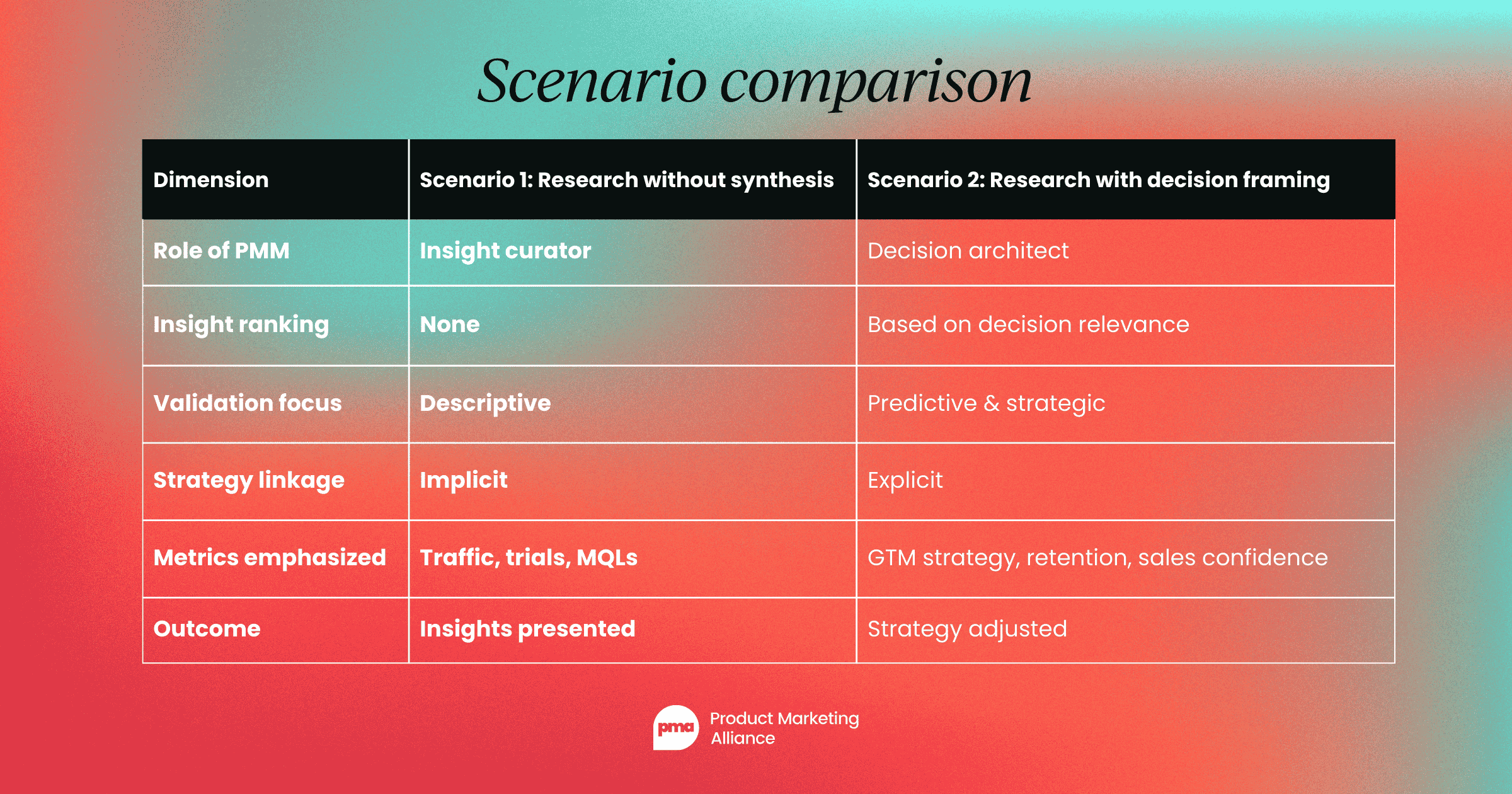

Scenario comparison

From insight accumulation to decision lenses

Once the contrast between these two scenarios becomes visible, the next question is inevitable: how do senior PMMs consistently tell the difference?

In practice, this is where many teams get stuck. They sense that some insights feel more “strategic” than others, but lack a shared way to articulate why. As a result, decisions default to intuition, hierarchy, or timing rather than disciplined synthesis.

What changes in teams where research does influence strategy is not a new method of data collection, but the introduction of decision-oriented lenses – explicit criteria that insights must pass before they are allowed to inform high-stakes choices.

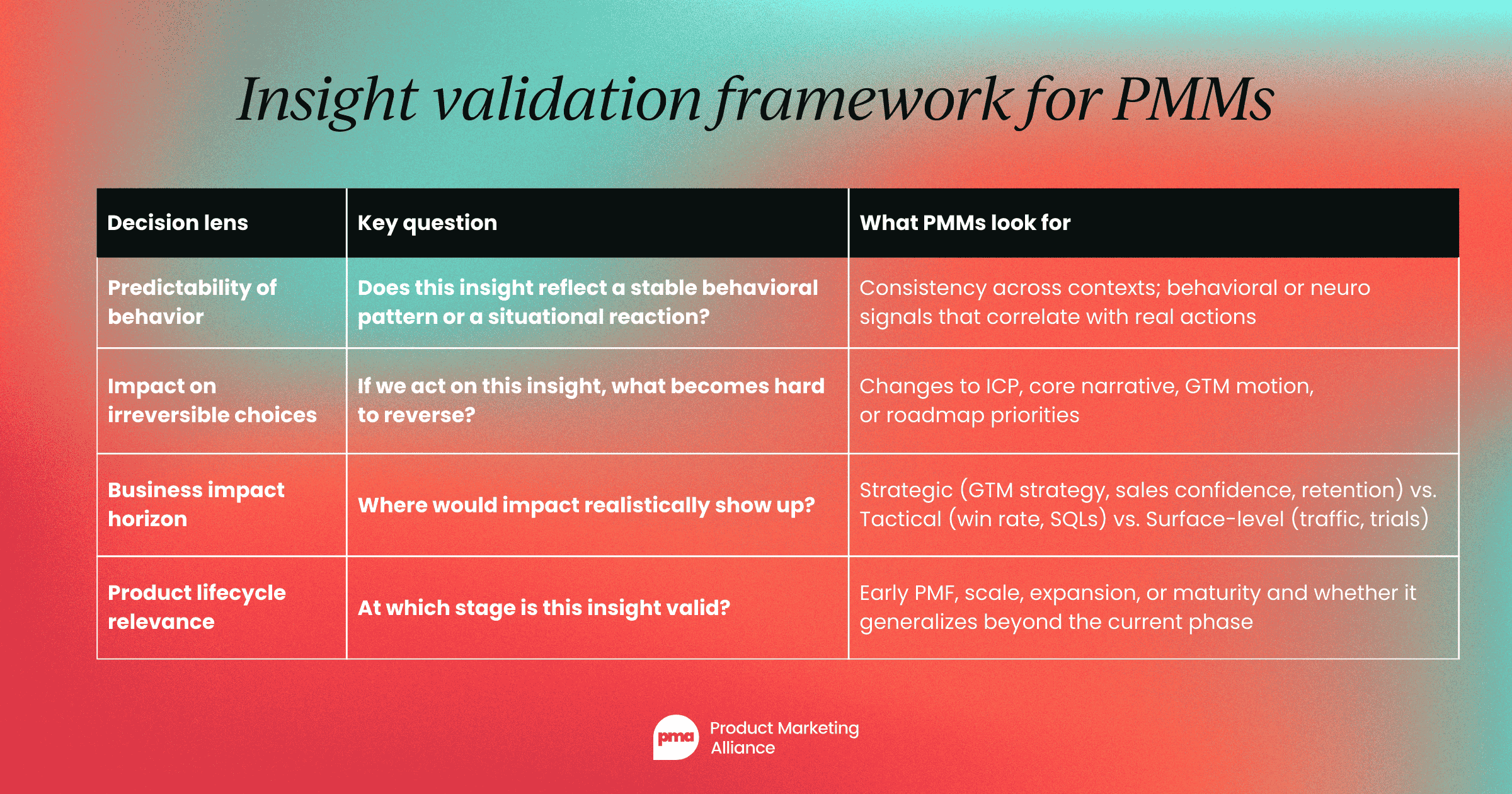

Insight validation framework for PMMs

What these lenses provide is discipline. They make synthesis explicit rather than implicit. They replace intuition with shared language. And they allow PMMs to move from presenting findings to framing choices: from explaining customers to helping the business commit.

This is the moment where customer research stops being a collection of observations and becomes a strategic input. Not because it is exhaustive, but because it is selective.

Conclusion

Across companies of all sizes, the same pattern repeats: research generates understanding, but decisions still default to caution, precedent, or timing. The gap isn’t in data quality; it’s in how insights are translated into choices the business is willing to commit to.

This is where the role of the PMM becomes decisive. Not as someone who produces or presents insights, but as someone who takes responsibility for synthesis, prioritisation, and framing. Someone who is willing to say: this matters strategically, and this doesn’t, at least not now.

Not every validated insight deserves to shape strategy. But the ones that do require clarity, courage, and structure to be acted on. And that shift is less about better research and more about better ownership.

For an industry that invests billions in understanding customers, the real challenge is no longer learning more but deciding what to do with what we already know.
