For years, product marketing has been a discipline defined by trade-offs. We’ve had to choose between the richness of qualitative feedback and the scale of quantitative data. Historically, analyzing free-text responses at scale was so slow, manual, and messy that we were often advised to avoid open-ended questions entirely.

But the "ceiling" of what’s possible in our role has shifted. Being an AI-native PMM doesn’t mean replacing your intuition with an algorithm; it means using AI to remove the practical barriers that once limited your strategic impact.

When used well, AI helps you move faster, see patterns sooner, and connect dots across sources that would otherwise remain isolated.

The refreshed Product Marketing Certified: Core curriculum is designed to help you navigate this transition, ensuring you stay in the driver's seat while AI handles the heavy lifting.

Research: Scaling the "unscalable"

The traditional reliance on closed-ended questions and rating scales was a compromise born of necessity. Nowadays, AI allows you to include open-ended questions alongside quantitative ones and cluster responses in minutes rather than days. You no longer have to choose between depth and volume.

The power of synthesis

In most organizations, customer signals are scattered: CRM notes, Slack threads, sales call summaries, and support tickets.

Taken individually, these appear anecdotal; collectively, they’re a goldmine. AI enables you to analyze these fragments together, surfacing emerging risks or opportunities that no single team would identify in isolation.

This mirrors how buyers actually experience your company – as a series of interactions rather than neat datasets.

- Actionable tip: Use AI to uncover the language customers naturally use in interviews, then deploy surveys with both scaled questions and open-text follow-ups to see not only what people feel, but also why they feel that way.

- The competitor unlock: Use AI to track movement on competitor websites. Remember, a messaging change provides a signal, but the interpretation of that signal still sits firmly with you.

- The accountability guardrail: If AI-generated analysis is wrong, nothing happens to the model. If you act on it uncritically and make a bad call, the impact lands on you. AI outputs are inputs into decision-making, not the decision itself.

Dynamic personas vs. static documents

The challenge in persona work is being inundated with data: insights are often buried in a mountain of transcripts and docs. AI is changing how this work gets done by reducing the friction between collecting insight and turning it into something usable.

Moving beyond generalizations

Traditionally, persona development relied on a small set of interviews and manual tagging that took days. AI now allows PMMs to review far larger volumes of qualitative input without defaulting to surface-level generalizations.

- Pressure-testing: Use AI to identify where existing persona assumptions no longer hold. For example, if a persona is defined as price-sensitive but recent deal notes emphasize ease of implementation, that’s a signal worth investigating.

- Persona differentiation: In complex B2B environments, roles often blur. AI helps identify subtle differences in language, priorities, and objections across roles by analyzing how different groups discuss the same problem.

- Activation over creation: AI can help translate persona insights into tailored messaging variations or sales prompts. This improves relevance without creating fragmentation.

Positioning: Finding clarity in chaos

Positioning work pulls in raw ingredients from everywhere: win/loss notes, competitor claims, and sales objections. These inputs are often buried in messy docs or CRM fields that no one fills in properly. AI acts as the "first-pass analyst" that pulls this chaos into common themes.

Exploring the options

The risk in positioning isn’t usually that you have no good story; it’s that you pick one without properly seeing the others. AI makes it easy to generate multiple plausible directions – different frames of reference or "so-what" outcomes – so you can compare them side-by-side.

- The "buyer mirror": Feed a draft pitch into an AI and ask it to reflect back the problem it thinks your product solves. If the mirror comes back fuzzy, your words aren't doing enough work yet.

- Differentiation testing: Give AI a set of competitor claims and your draft narrative and ask, "What would a skeptical buyer say is meaningfully different here?"

- Consistency audits: AI can spot when messaging has quietly drifted away from positioning. It can flag jargon creep or contradictions across assets, allowing you to update the single source of truth.

GTM scenario planning and signal detection

One of the biggest challenges in GTM planning is the sheer volume of variables: segments, channels, and timelines. AI is particularly strong at handling this complexity.

Strategic GTM design

Example of generic vs. differentiated AI outputs for a PMM

Scenario: Positioning statement for a B2B data analytics platform

The first output could describe Tableau, Looker, Domo, or a dozen others. The second could only describe your product, in your customer's language.

Scenario: Launch email for a new feature

Generic input: "Write a launch email for our new reporting feature."

We're excited to announce a powerful new reporting feature that gives you deeper insights into your data. With customizable dashboards and real-time metrics, you'll make better decisions faster...

Differentiated input: "Write a launch email. The new feature is scheduled reports that auto-send to stakeholders every Monday morning. Our buyers are RevOps managers who told us in research that they spend 2+ hours every week manually exporting and emailing reports to their CRO. One customer said, 'I feel like a glorified Excel monkey.' Tone should be direct and a little irreverent – that's our brand voice."

Every Monday, thousands of RevOps managers export the same report, paste it into an email, and hit send. Again. We just automated that for you. [Feature] sends your CRO the metrics they want, every Monday at 7am, without you touching a thing...

The pattern across both examples

The generic outputs fail in a specific way: they describe a category of product, not your product. The differentiated outputs work because they're built from three ingredients AI can't invent on its own:

- Customer language: The actual words your buyers use to describe their pain ("flying blind," "Excel monkey")

- Specific mechanism: The precise thing your product does differently (carrier API vs. CSV exports)

- Context about who cares: The exact role, company size, and moment that makes this relevant

AI is a transformation engine. It can reshape, refine, and scale your inputs – but it cannot manufacture the specificity that makes marketing land. That raw material has to come from you: from customer interviews, win/loss analysis, sales call recordings, and your own product knowledge.

The PMMs who get the most out of AI are the ones who treat prompt-writing as a documentation exercise – forcing themselves to articulate the specific, differentiated truths about their product before the AI ever touches it.
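One way to make that documentation habit concrete is a small brief that refuses to produce a prompt until the three ingredients are filled in. The sketch below is only an illustration: `PromptBrief` and its field names are hypothetical, and the sample values are taken from the launch email scenario above.

```python
from dataclasses import dataclass


@dataclass
class PromptBrief:
    """Hypothetical container for the three ingredients (not a real library)."""
    task: str
    customer_language: str   # verbatim buyer quote
    mechanism: str           # the specific thing the product does
    audience_context: str    # who cares, and in what situation
    tone: str = ""           # optional brand-voice note

    def missing(self) -> list[str]:
        """Return the names of required ingredients that are still blank."""
        required = ("task", "customer_language", "mechanism", "audience_context")
        return [name for name in required if not getattr(self, name).strip()]

    def render(self) -> str:
        """Assemble the prompt text, refusing to proceed if anything is blank."""
        gaps = self.missing()
        if gaps:
            raise ValueError(f"Brief is incomplete, fill in: {', '.join(gaps)}")
        lines = [
            f"Task: {self.task}",
            f"What the feature does: {self.mechanism}",
            f"Who it is for: {self.audience_context}",
            f'Customer quote to echo: "{self.customer_language}"',
        ]
        if self.tone.strip():
            lines.append(f"Tone: {self.tone}")
        return "\n".join(lines)


# Values below come from the launch email scenario in this article.
brief = PromptBrief(
    task="Write a launch email.",
    customer_language="I feel like a glorified Excel monkey.",
    mechanism="Scheduled reports that auto-send to stakeholders every Monday morning.",
    audience_context=(
        "RevOps managers who spend 2+ hours every week manually exporting "
        "and emailing reports to their CRO."
    ),
    tone="Direct and a little irreverent.",
)
prompt = brief.render()
```

A brief built from the generic input alone ("Write a launch email for our new reporting feature.") would fail at `render()`, which is the point: the gaps show up before the AI ever sees the prompt.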

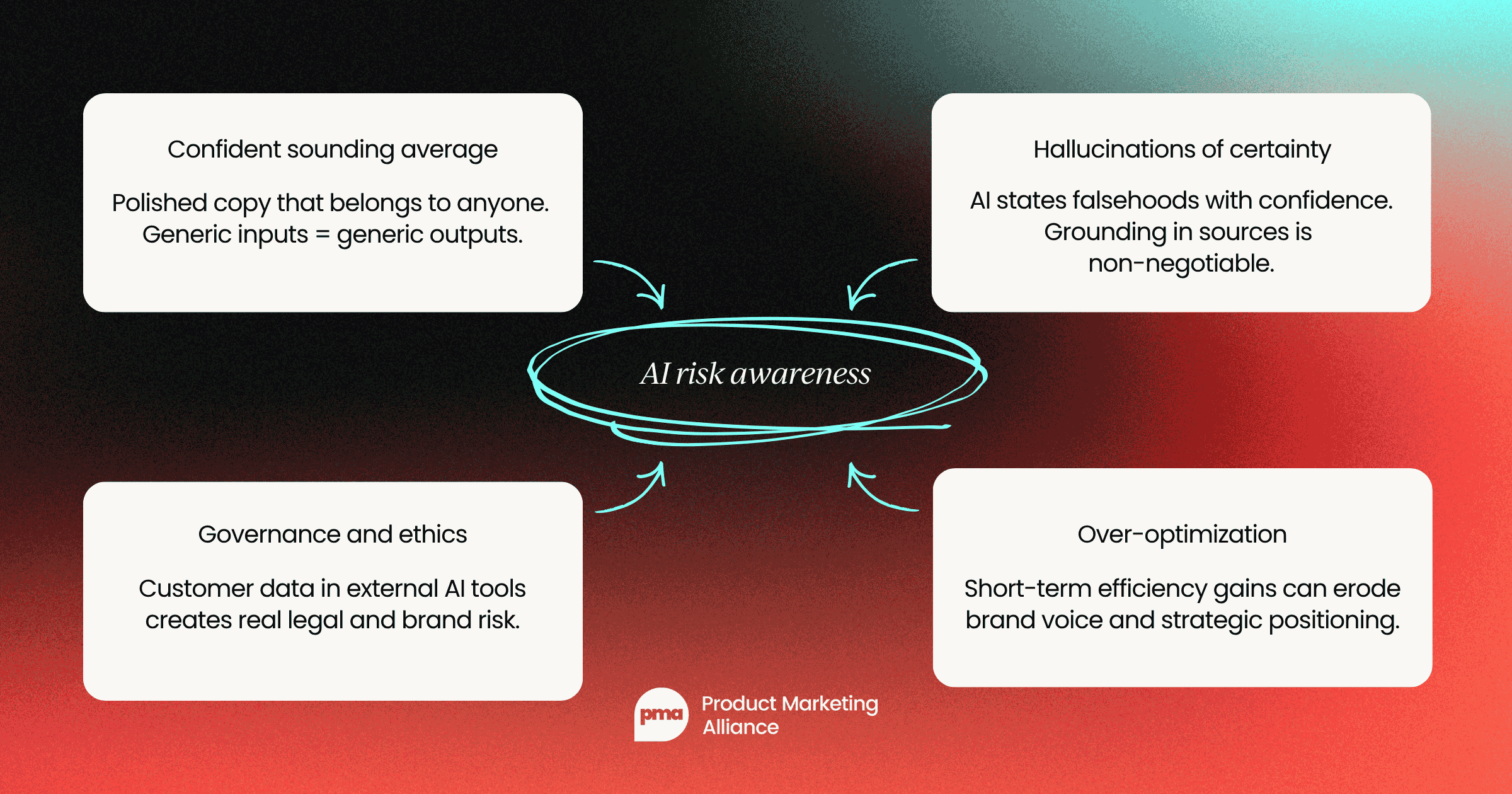

Navigating the AI risks

To be an expert AI-native PMM, you must recognize the pitfalls that "shallow" users ignore. Knowing these risks makes you the person on the team who gets better outputs, because you understand the failure modes.

Risk 1: The "confident-sounding average"

AI models are trained to produce fluent, coherent text, which means they're exceptionally good at generating output that sounds like good marketing copy.

The problem is that "sounds good" and "is differentiated" are completely different things. If you prompt with vague, category-level inputs ("write a positioning statement for our data analytics platform"), you'll get output that could describe any of your competitors just as accurately as it describes you.

The fix is specificity in your inputs. Feed the AI the things only you can know: verbatim quotes from your best customers, your specific contrarian product bets, the precise language your CEO uses in board meetings, the use cases that close deals against a specific competitor. Your differentiation lives in the raw material, not in the prompt.

Risk 2: Hallucinations of certainty

Unlike a human, who might hedge with "I think" or "I'm not sure," AI models often state inaccurate information in the same confident register they use for established facts. In a product marketing context, this is particularly dangerous for competitive intelligence. AI can fabricate competitor feature claims, attribute quotes to executives who never said them, or confuse version histories.

The mitigation here is architectural: always ground competitive research in a closed set of sources.

Your prompt should make this explicit: "Only use the information in these three earnings call transcripts. Quote the exact phrase from the source. If you're uncertain, say so." Forcing the model to cite exact sources both improves accuracy and makes hallucinations easier to catch in review.
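The review step can be partly automated. The following is a minimal sketch, assuming the model's answer puts quoted phrases in straight double quotes; the function names are hypothetical, and the sample source text and answer are invented placeholders, not real transcript content. It builds a prompt around a closed set of sources and then lists any quoted phrase that appears in none of them.

```python
import re


def normalize(text: str) -> str:
    """Lowercase, straighten curly apostrophes, and collapse whitespace."""
    text = text.replace("\u2019", "'").replace("\u2018", "'")
    return re.sub(r"\s+", " ", text).strip().lower()


def build_grounded_prompt(question: str, sources: dict[str, str]) -> str:
    """Wrap the question in the closed-source instructions quoted above."""
    rules = (
        "Only use the information in the sources below. "
        "Quote the exact phrase from the source. "
        "If you're uncertain, say so."
    )
    blocks = [f"[{name}]\n{body}" for name, body in sources.items()]
    return rules + "\n\n" + "\n\n".join(blocks) + f"\n\nQuestion: {question}"


def unverified_quotes(answer: str, sources: dict[str, str]) -> list[str]:
    """Return quoted phrases from the answer that appear in no source."""
    corpus = [normalize(body) for body in sources.values()]
    quotes = re.findall(r'"([^"]+)"', answer)
    return [q for q in quotes if not any(normalize(q) in body for body in corpus)]


# Invented placeholder text, for illustration only.
sources = {"transcript_a": "We expect to expand the partner program next year."}
answer = 'They plan to "expand the partner program" and to "double headcount".'

prompt = build_grounded_prompt("What did they announce?", sources)
flagged = unverified_quotes(answer, sources)
print(flagged)  # ['double headcount']
```

A check like this only catches quotes that do not exist in the sources. A real quote attached to the wrong claim still needs a human reader.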

Risk 3: Governance and ethics

Most organizations have strict policies – often legally required – about what data can be processed by external AI services. Customer PII, unpublished financial data, and confidential product roadmaps almost certainly fall into this category.

The enthusiasm to move fast with AI can create real compliance and reputational exposure if those controls aren't respected.

Best practice means knowing which tools are approved by your legal and security teams before you need them, understanding what data anonymization is required for specific use cases, and defaulting to internal or enterprise-licensed models when in doubt.
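As an illustration of what basic anonymization can look like before text leaves your environment, here is a rough sketch that masks email addresses and phone numbers in a note. The patterns are deliberately simple assumptions and the sample note is invented; they will miss names, account IDs, and many other identifiers, so treat this as a starting point rather than a substitute for whatever your legal and security teams require.

```python
import re

# Simplified patterns, assumed for illustration; real PII detection needs more.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like digit runs with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


# Invented sample note.
note = "Spoke with jane.doe@example.com, call back on +1 (555) 010-2030 about renewal."
print(redact(note))
# Spoke with [EMAIL], call back on [PHONE] about renewal.
```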

Risk 4: Over-optimization

AI excels at optimizing for measurable short-term signals: click-through rate, time-on-page, email open rates. The risk is that optimizing relentlessly for these metrics – letting AI continuously A/B test and refine toward performance – can quietly erode the distinctive brand voice and strategic positioning that differentiates you over years, not quarters.

The guardrail is maintaining a clear separation between efficiency decisions (where AI should optimize freely) and strategy decisions (where human judgment governs). Let AI optimize the subject line; don't let it quietly rewrite your brand narrative one high-CTR headline at a time.

Elevate your role with Product Marketing Certified: Core

The Product Marketing Certified: Core release is built for the PMM who wants to be an architect of strategy. We teach you how to treat AI as a thinking partner to reduce grunt work, not to outsource your accountability.
